Re-watch this meetup:

Technology is not neutral. Instead, it is a reflection of our cultural and political beliefs, which include prejudices, inequalities and racism. It is therefore important to ask questions about who is in control. And what influence does this have on the inclusiveness of the (digital) public domain?

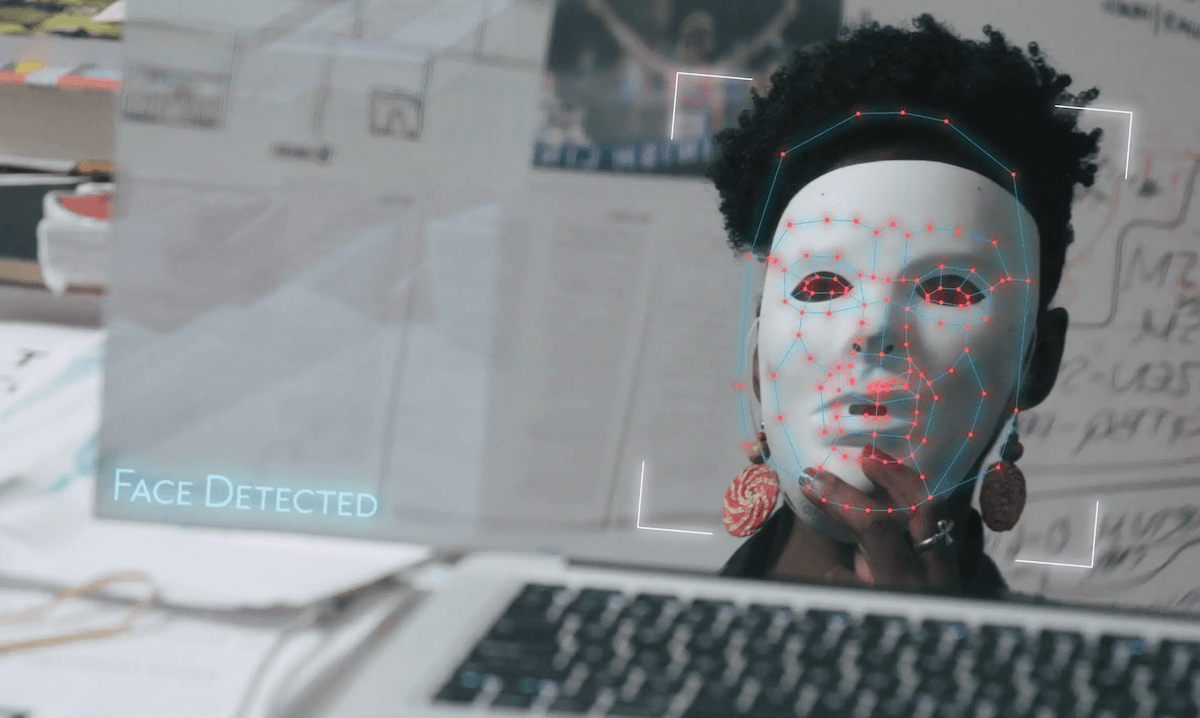

In today’s world, AI systems are used to decide who gets hired, the quality of medical treatment we receive, and whether we become a suspect in a police investigation. While these tools show great promise, they can also harm vulnerable and marginalized people, and threaten civil rights. Unchecked, unregulated and, at times, unwanted, AI systems can amplify racism, sexism, ableism, and other forms of discrimination.

> Read more: The Algorithmic Justice League

Waag is hosting the screening of the film Coded Bias, to be viewed through Cinesend from 15 to 22 October. Coded Bias highlights the stories of people who have been impacted by harmful technology and shows pioneering women sounding the alarm about the threats posed by artificial intelligence to civil rights. Central to the documentary is the story of Joy Buolamwini, researcher at MIT, who discovered that most facial recognition technologies do not respond to her face due to the colour of her skin. Coded Bias premiered at the Sundance Film Festival in January 2020.

About Coded Bias

Synopsis: 'Modern society sits at the intersection of two crucial questions: what does it mean when artificial intelligence increasingly governs our liberties? And what are the consequences for the people AI is biased against? When MIT Media Lab researcher Joy Buolamwini discovers that most facial-recognition software does not accurately identify darker-skinned faces and the faces of women, she delves into an investigation of widespread bias in algorithms. As it turns out, artificial intelligence is not neutral, and women are leading the charge to ensure our civil rights are protected.'

Biased algorithms are a concrete and alarming example of exclusion facilitated by technology. The screening of Coded Bias allows us to continue the conversation about ethics in AI, and to reflect on the role of AI in our future. From 15 to 22 October, attendees will be able to view the Coded Bias documentary through Cinesend. The link to the film will be sent to you after registration. Then, during our public program on Thursday 22 October, we will discuss ethics in AI with the filmmaker, Shalini Kantayya. After a short introduction to the film, Waag’s director Marleen Stikker will enter into conversation with Kantayya. After this, she will moderate the Q&A with the audience. We would love to hear from you too! What do you think about our (digital) society? What can we do to protect our civil rights?

Please note: to watch Coded Bias through Cinesend with us, your location must be in the Netherlands. The Q&A on Thursday 22 October will NOT include a screening of the film. The film is in English and will not come with subtitles.

Program

15-22 October – attendees (located in the Netherlands) can watch Coded Bias through Cinesend

Thursday 22 October

20:00-20:15 hrs – Introduction to the Coded Bias documentary

20:15-20:30 hrs – Filmmaker Shalini Kantayya in conversation with Marleen Stikker

20:30-21:30 hrs – Discussion and Q&A with audience

Trailer