How can you place virtual objects in real spaces? In the Mixed Reality @ Oude Kerk project we explore, among other things, how virtual objects can be added to reality. At the Oude Kerk we are experimenting with virtual versions of the apostle statues that were lost during the iconoclasm. For this we use a HoloLens, a pair of mixed reality glasses that lets you see objects in 3-D. Unlike virtual reality, the HoloLens adds the virtual objects to the real space. Thanks to the glasses' spatial recognition, the objects stay in place and keep the right perspective as the user moves around.

How does this spatial recognition work?

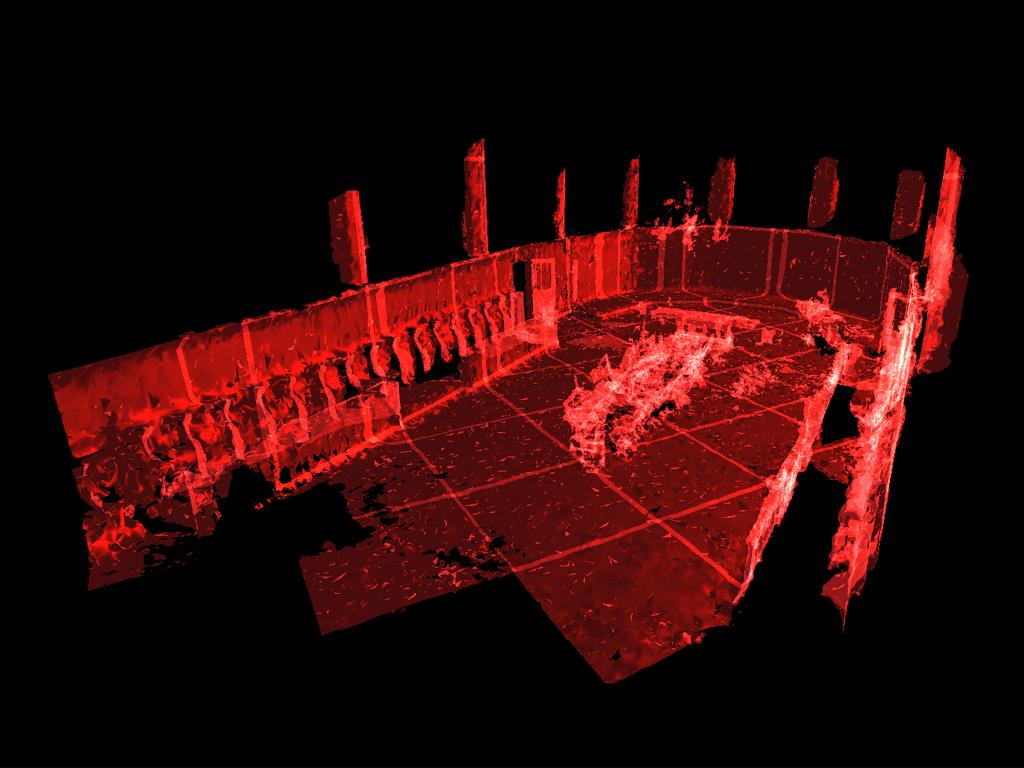

Because we want to give the virtual objects a precise location in the church, we first have to scan the part of the church we want to use. The technique we use for this, called “spatial mapping”, is part of the MixedRealityToolkit (an open-source framework for developing MR applications). While the HoloLens user looks around, the glasses build a 3-D model of the space: the spatial sensor in the glasses measures the distance to walls, floors and objects. To complete the model, it helps to get close to the surfaces of the space being "scanned"; for the high pillars, for example, we needed stairs to get close enough. [video] While wearing the glasses, the user can watch the model being built "live", as a kind of blanket draped over the real space. The model is continuously updated as more of the space is mapped. This direct feedback shows the user exactly where the model still has "holes", which can then be filled by looking at that area again or from a different angle.
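To make the idea of "holes" a bit more concrete, here is a toy sketch in Python. It is not how the MixedRealityToolkit actually builds its mesh; it simply assumes the space is divided into small cubes (voxels, 25 cm here, a made-up resolution) and that every distance measurement marks the voxel it lands in as "seen". Any voxel in a region we care about that no measurement has reached yet counts as a hole. All names (`CoverageMap`, `voxel_of`, `VOXEL_SIZE`) are hypothetical.

```python
import math
from collections import Counter

VOXEL_SIZE = 0.25  # metres; hypothetical resolution chosen for illustration


def voxel_of(point, size=VOXEL_SIZE):
    """Return the integer index of the voxel that contains a 3-D point (in metres)."""
    return tuple(math.floor(c / size) for c in point)


class CoverageMap:
    """Toy stand-in for a spatial map: counts how often each voxel was hit by a measurement."""

    def __init__(self):
        self.hits = Counter()

    def integrate(self, origin, direction, distance):
        """Record one distance measurement along a unit-length direction from the sensor."""
        surface_point = tuple(o + distance * d for o, d in zip(origin, direction))
        self.hits[voxel_of(surface_point)] += 1

    def holes(self, region):
        """Voxels in the region of interest that no measurement has reached yet."""
        return [v for v in region if self.hits[v] == 0]


if __name__ == "__main__":
    # A 1 m by 1 m patch of wall 2 m in front of the user (x = 2.0): 4 x 4 voxels.
    wall = [(8, y, z) for y in range(4) for z in range(4)]
    scan = CoverageMap()
    # The user steps sideways while looking straight ahead, but covers only half the patch.
    for y in (0.1, 0.35):
        for z in (0.1, 0.35, 0.6, 0.85):
            scan.integrate((0.0, y, z), (1.0, 0.0, 0.0), 2.0)
    print(len(scan.holes(wall)), "of", len(wall), "voxels still unseen")  # prints: 8 of 16 voxels still unseen
```

Looking at the remaining half of the patch (the other two `y` positions) would bring the hole count down to zero, which mirrors filling the gaps in the "blanket" by looking again or from another angle.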

Once the HoloLens has a good model of the space, it can recognise its own location from the geometry. This makes it possible to "anchor" virtual objects in the space so that they appear in the same place every time. Such a “spatial anchor” is actually a set of landmarks in the model. To place an object, the HoloLens uses the user's viewing direction: when an object is straight in front of the user, they see a cursor projected onto it. The user can then select that object with an "airtap" (a hand gesture). In our application, this selection makes the object movable, after which it moves along with the cursor as the user changes their viewing direction. A second "airtap" completes this 3-D variant of "drag and drop".
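The gaze-and-airtap interaction can be thought of as a small state machine. The sketch below is a simplified Python illustration, not MixedRealityToolkit code: it assumes statues are treated as bounding spheres, the gaze is a unit-length ray from the head, and the spatial anchor is reduced to a single origin point with translation only (real spatial anchors also track orientation). All names (`Statue`, `GazeDragDrop`, `gaze_hit`, `restore`) are hypothetical.

```python
import math
from dataclasses import dataclass

Vec = tuple[float, float, float]


def add(a: Vec, b: Vec) -> Vec:
    return tuple(x + y for x, y in zip(a, b))


def sub(a: Vec, b: Vec) -> Vec:
    return tuple(x - y for x, y in zip(a, b))


def scale(a: Vec, s: float) -> Vec:
    return tuple(x * s for x in a)


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


@dataclass
class Statue:
    name: str
    position: Vec
    radius: float  # bounding sphere used for hit testing (a simplification)


def gaze_hit(head: Vec, gaze: Vec, statue: Statue):
    """Distance along the unit gaze ray to where it enters the statue's sphere, or None on a miss."""
    to_centre = sub(statue.position, head)
    t = dot(to_centre, gaze)  # distance to the point of closest approach
    if t < 0:
        return None  # statue is behind the user
    closest_sq = dot(to_centre, to_centre) - t * t
    if closest_sq > statue.radius ** 2:
        return None  # ray passes beside the statue
    return t - math.sqrt(statue.radius ** 2 - closest_sq)


class GazeDragDrop:
    """First airtap picks up the statue under the cursor, second airtap drops it."""

    def __init__(self, statues, anchor_origin: Vec):
        self.statues = statues
        self.anchor_origin = anchor_origin
        self.held = None
        self.hold_distance = 0.0
        self.offsets = {}  # statue name -> drop position relative to the anchor

    def cursor_target(self, head: Vec, gaze: Vec):
        """Nearest statue hit by the gaze ray, i.e. the one the cursor lands on."""
        best, best_dist = None, math.inf
        for statue in self.statues:
            d = gaze_hit(head, gaze, statue)
            if d is not None and d < best_dist:
                best, best_dist = statue, d
        return best

    def airtap(self, head: Vec, gaze: Vec):
        if self.held is None:
            self.held = self.cursor_target(head, gaze)
            if self.held is not None:
                # keep the statue at its current distance along the gaze
                self.hold_distance = dot(sub(self.held.position, head), gaze)
        else:
            # store the drop position relative to the anchor, then release
            self.offsets[self.held.name] = sub(self.held.position, self.anchor_origin)
            self.held = None

    def update_gaze(self, head: Vec, gaze: Vec):
        """While held, the statue follows the viewing direction, like the cursor."""
        if self.held is not None:
            self.held.position = add(head, scale(gaze, self.hold_distance))

    def restore(self, name: str, relocated_anchor: Vec) -> Vec:
        """Where a dropped statue appears once the anchor is recognised again."""
        return add(relocated_anchor, self.offsets[name])
```

For example, a statue 3 m straight ahead is picked up with one airtap, swings along when the user turns their head 90 degrees, and stays put after the second airtap. Because only its offset from the anchor is stored, if the anchor is later recognised 0.5 m from where this session placed it, `restore` moves the statue by the same 0.5 m, so it stays in the same spot relative to the church.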

In our next blog post, we will share more about the first Mixed Reality demo we organised for visitors to the Oude Kerk.